PurpleTeam alpha (both local and cloud environments) has been released after several years of hard work, mostly on top of a day job.

This is a very condensed run-down of the process of taking PurpleTeam (a web security regression testing SaaS and CLI) from Proof of Concept (PoC) to Alpha release.

PoC

Q: What were my intentions in creating the original Proof of Concept (PoC)? What was I trying to achieve?

A: Elicit Developer feedback. Find out what Developers and their Teams really needed for just-in-time security regression testing of their web applications and APIs, and how to get this process (dynamic security testing) as close as possible to the coding of their applications and APIs.

Q: What did I do with the PoC?

A: I took it around the world, speaking and running workshops with Developers and their Teams. That’s right, getting this process as close as possible to Developers and their Teams.

To name a few such events:

- CHCH.js Meetup 2016

- OWASP Chch Meetup 2016

- OWASP NYC Meetup 2016

- NodeConf EU 2016

- NodeJS Meetup Auckland 2016

- AWS Meetup Auckland 2016

- OWASP NZ Day Auckland 2019

There are many Static Application Security Testing (SAST) tools available. As Developers we need both static and dynamic application security testing.

The Proof of Concept I created several years ago was to work out exactly what Developers and their Teams needed in terms of Dynamic Application Security Testing (DAST) capabilities to complement the many SAST tools already available and able to be plugged into or consumed by your CI/build pipelines.

I’ve written extensively in the past on SAST offerings, for example the Web Applications chapter of my 2nd book Holistic Info-Sec for Web Developers covers:

- The perils of consuming free and open source libraries

- Countermeasures to the above perils

- Tooling options for SAST

Journey

If you’re a Developer creating internet-facing applications, you know security is something you need to be thinking about, right? As Developers we all need as much automated help with improving our AppSec as possible, as we’re creating it: no blockers, just enablers.

Many organisations spend many thousands of dollars on security defect remediation of the software projects they create. Usually this effort is also performed late in the development life-cycle, often even after the code is considered done. This makes the remediation effort very costly and often too short. Because of this, many bugs remain in the software and get deployed to production.

PurpleTeam strikes at the very heart of this problem. PurpleTeam is a CLI and back-end/API (SaaS). The CLI can be run manually, but its sweet spot is being inserted into Development Teams’ build pipelines, where it can find the security defects in your running web applications and APIs, and provide immediate and continuous notification of what and where your security defects are, along with tips on how to fix them.

The PurpleTeam back-end runs smart dynamic application security testing against your web applications or APIs. The purpleteam CLI drives the PurpleTeam back-end.

I have also created the ability to add testers. There is currently a TLS checker and a server scanner stubbed out and ready to be implemented. Feel free to dive in and start implementing.

If there is a tester that you need that PurpleTeam doesn’t have, you can now create it.

Environments

local

The local environment is free and open source. It is also now an OWASP project.

- There’s quite a bit of set-up to do

- You need to set-up all the micro-services

- All the set-up should be documented here. Documentation will be moving to a proper doc site soon.

You will need to set-up the following:

1. Lambda functions

2. Stage 2 containers

3. Orchestrator

4. Testers (only app currently)

5. Get the purpleteam CLI on your system

   1. Install it, the options are:

   2. Configure it and create your Job file

6. Run your System under Test (SUT). We use purpleteam-iac-sut to build/deploy our cloud SUTs

7. Run the purpleteam CLI

cloud

The cloud environment has a cost, because PurpleTeam-Labs has to maintain the infrastructure that the SaaS runs on, but it is the easiest and quickest way to get going.

All infrastructure set-up is done for you. You just need to set-up the following:

- Get the purpleteam CLI on your system (same as step 5.1 of local)

- Configure the CLI and create your Job file (similar to step 5.2 of local)

- Run your SUT (same as step 6 of local)

- Run the purpleteam CLI (same as step 7 of local)

Architecture and Tech

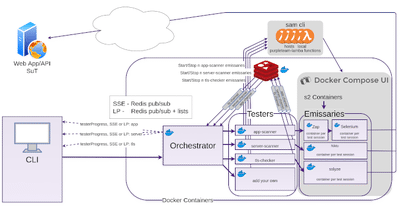

local

Redis pub/sub is used to transfer Tester messages (live update data) from the Tester micro-services to the Orchestrator. The Build User can configure the purpleteam CLI to receive these messages via Server Sent Events (SSE) or Long Polling (LP). The Orchestrator also needs to be configured to use either SSE or LP. With LP, if the CLI goes off-line at some point during the Test Run and then comes back on-line, no messages will be lost, because the Orchestrator persists the messages it’s subscribed to back to Redis lists, then pops them off the given lists as each LP request comes in and returns them to the CLI. LP is request->response, whereas SSE is one way. In saying that, LP can be quite efficient, as we are able to batch messages into arrays to be returned.
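To make the Long Polling behaviour a little more concrete, here is a minimal sketch of the idea rather than the Orchestrator’s actual code. It assumes an in-memory stand-in for the Redis lists so it runs without a Redis server, and every name in it (MessageStore, onTesterMessage, longPoll, the list key format) is hypothetical.

```javascript
// In-memory stand-in for Redis lists, so this sketch runs without a Redis server.
// All names here are hypothetical and not taken from the PurpleTeam codebase.
class MessageStore {
  constructor() {
    this.lists = new Map(); // list key -> array of messages
  }

  // Append a message to the end of a list (comparable to a Redis RPUSH).
  push(key, message) {
    if (!this.lists.has(key)) this.lists.set(key, []);
    this.lists.get(key).push(message);
  }

  // Remove and return everything currently in the list, oldest first.
  drain(key) {
    const messages = this.lists.get(key) || [];
    this.lists.set(key, []);
    return messages;
  }
}

const store = new MessageStore();

// Called whenever a subscribed Tester message arrives via pub/sub.
const onTesterMessage = (sessionId, message) => store.push(`app-${sessionId}`, message);

// Called for each Long Polling request from the CLI: returns a batch (possibly empty).
const longPoll = (sessionId) => store.drain(`app-${sessionId}`);

// Messages published while the CLI is off-line are kept...
onTesterMessage('lowPrivUser', 'Tester initialised');
onTesterMessage('lowPrivUser', 'Scan 40% complete');

// ...and delivered as one batch when the CLI next polls.
const firstBatch = longPoll('lowPrivUser');
const secondBatch = longPoll('lowPrivUser');
console.log(firstBatch, secondBatch);
```

Because each poll drains the list, a CLI that reconnects late simply receives a larger array on its next request, which is where the batching efficiency comes from.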

Orchestrator

The Orchestrator is responsible for:

- Organising and supervising the Testers

- Sending real-time Tester messages to the CLI via either SSE or LP

- Packaging and sending the outcomes (test reports, test results) back to the CLI as they become available

- Validating, filtering and sanitising the Build User’s input

Testers

Each Tester is responsible for:

- Obtaining resources, cleaning up and releasing resources once the Test Run is finished

- Starting and Stopping Stage Two Containers (hosted on docker-compose-ui) dynamically (via Lambda Functions hosted locally via sam cli) based on the number of Test Sessions provided by the Build User in the Job file which is sent from the CLI to the Orchestrator, then disseminated to the Testers. The following shows two Test Sessions from a test resource Job that we use:

    ...
    "included": [
      {
        "type": "testSession",
        "id": "lowPrivUser",
        "attributes": {
          "username": "user1",
          "password": "User1_123",
          "aScannerAttackStrength": "HIGH",
          "aScannerAlertThreshold": "LOW",
          "alertThreshold": 12
        },
        "relationships": {
          "data": [{ "type": "route", "id": "/profile" }]
        }
      },
      {
        "type": "testSession",
        "id": "adminUser",
        "attributes": {
          "username": "admin",
          "password": "Admin_123"
        },
        "relationships": {
          "data": [{ "type": "route", "id": "/memos" }, { "type": "route", "id": "/profile" }]
        }
      },
    ...

- The actual (app, server, tls, etc) test plan
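As a rough illustration of how the Test Sessions in a Job could drive the number of Stage Two Containers, the following sketch picks the testSession resources out of a trimmed-down Job and lists the routes each one covers. The job object is a hypothetical cut-down version of the excerpt above (the extra top-level route resource is only there to show the filtering), and the assumption of one container per Test Session is a simplification, not a statement of how the Testers are implemented.

```javascript
// Hypothetical, trimmed-down Job shaped like the excerpt above.
const job = {
  included: [
    {
      type: 'testSession',
      id: 'lowPrivUser',
      relationships: { data: [{ type: 'route', id: '/profile' }] }
    },
    {
      type: 'testSession',
      id: 'adminUser',
      relationships: { data: [{ type: 'route', id: '/memos' }, { type: 'route', id: '/profile' }] }
    },
    // Hypothetical non-session resource, included only to show that it is filtered out.
    { type: 'route', id: '/profile' }
  ]
};

// Pick out the Test Sessions and the routes each one will exercise.
const testSessions = job.included
  .filter((resource) => resource.type === 'testSession')
  .map((session) => ({
    id: session.id,
    routes: session.relationships.data
      .filter((related) => related.type === 'route')
      .map((related) => related.id)
  }));

// Assumption for this sketch: one Stage Two Container per Test Session.
const containersNeeded = testSessions.length;
console.log(containersNeeded, testSessions);
```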

SAM CLI

The AWS SAM CLI stays running and listening for the Tester requests to run the lambda functions which start and stop the Stage Two Containers.

docker-compose-ui

In local, docker-compose-ui is required to be running in order to start/stop its hosted (Stage Two) containers (it has access to the host’s Docker socket).

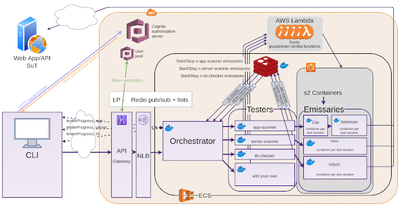

cloud

The cloud environment is similar in terms of functionality, although a good number of components are quite different.

For the Tester messages only Long Polling (LP) is available due to streaming APIs not being supported by AWS API Gateway. We could have used API Gateway WebSockets for bi-directional comms, but that doesn’t support OAuth client-credentials flow, which I had already completed.

When the CLI makes a request to the back-end (directly to the Orchestrator in local, but AWS API Gateway in cloud), first that request is intercepted and a request to the PurpleTeam auth domain is made with: grant_type, client_id of the user pool app client, scopes, client_secret. The Cognito authorisation server returns an access_token if all good. The CLI then makes requests with the access_token to the resource server, which in our case is the API Gateway. The resource server/API Gateway validates the access_token with the User pool. If all good, the original request is allowed to continue on its way.
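The token request described above can be sketched roughly as follows. This is a hedged illustration of a standard OAuth 2.0 client-credentials request, not the CLI’s actual code: it only builds the request and does not send it, the auth domain, client id, secret and scope are placeholders, and sending the client secret via HTTP Basic auth and the access_token as a bearer token are assumptions about one common way of doing this.

```javascript
// Build (but do not send) an OAuth 2.0 client-credentials token request.
// The helper names and all values passed in below are hypothetical placeholders.
const buildTokenRequest = ({ authDomain, clientId, clientSecret, scopes }) => {
  const body = new URLSearchParams({
    grant_type: 'client_credentials',
    client_id: clientId,
    scope: scopes.join(' ')
  });
  // Assumption: the client secret travels in an HTTP Basic Authorization header.
  const basic = Buffer.from(`${clientId}:${clientSecret}`).toString('base64');
  return {
    url: `https://${authDomain}/oauth2/token`,
    method: 'POST',
    headers: {
      'Content-Type': 'application/x-www-form-urlencoded',
      Authorization: `Basic ${basic}`
    },
    body: body.toString()
  };
};

// Assumption: later API requests carry the returned access_token as a bearer token.
const withAccessToken = (accessToken, headers = {}) => ({
  ...headers,
  Authorization: `Bearer ${accessToken}`
});

const tokenRequest = buildTokenRequest({
  authDomain: 'auth.example.com',
  clientId: 'example-client-id',
  clientSecret: 'example-client-secret',
  scopes: ['example-resource/example.scope']
});
const apiHeaders = withAccessToken('example-access-token', { Accept: 'application/json' });
console.log(tokenRequest.url, apiHeaders);
```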

Testers run their lambdas, lambdas tell ECS to spin up and tear down n (where n is the number of Test Sessions) stage 2 containers. I originally used AWS ALB but that didn’t support our authentication requirements, so I had to back out and swap it for API Gateway and NLB.

Pressures

Keeping NodeJS Dependencies up to date

The never ending battle of staying on top of a constantly moving NodeJS ecosystem. Never ending security and feature updates.

This issue has a check list of our last major updates after we finished the IaC for the cloud environment.

Forking/adopting libraries

Then there is the forking and/or rewriting of libraries when authors lose interest, no longer maintain them, or just no longer have the bandwidth. This must be expected and planned for when consuming free and open source libraries. Yes, it’s great to have the head start of being able to just use someone else’s code, but nothing is really free; everything ultimately costs. Just realise that if you are consuming free and open source libraries in your project, then at some stage you are going to have to dive into their code and either help out, or ultimately end up forking or rewriting.

Following are some of the libraries we have forked, ported and/or rewritten:

- mocksse was a rewrite/port of MockEvent. We use this library for mocking Server Sent Events (SSE)

- Cucumber functionality that was removed

- docker-compose-ui has been archived. This means we will have to either fork it, rewrite it, or research whether we can use something else. This isn’t currently urgent

Competitors

When I started developing PurpleTeam, as part of the business plan creation I needed to list my competitors. There was really only one. Now that competitor has mostly gone away and we have several new ones.

Just to be clear, when I say competitor, I’m talking about Dynamic Application Security Testing tools for the web that can be used natively in any build pipeline.

Our current competitors are doing things differently to us, with different offerings. We think PurpleTeam has unique aspects that make it stand out from the rest.

Next Steps

PurpleTeam local is now an OWASP project.

Consuming PurpleTeam

How can you start using PurpleTeam today?

As discussed in the Environments sub section, you have a few options:

- local: Set everything up yourself

- cloud: Sign-up for an account, set-up your test Job, get the CLI on your system

You can use the purpleteam CLI manually or consume it within your build pipelines.

- Manual examples:

- Within your NodeJS app or build pipeline

- Within your non NodeJS app or build pipelines

Contributing to PurpleTeam

- Is PurpleTeam missing something you need that would otherwise allow you to use it?

- Do you need to add a different kind of Tester?

- Have you found a bug?

Ways you can contribute to building #owasp #purpleteam https://t.co/yxdb9XJaIT

— PurpleTeam (@purpleteamlabs) February 20, 2021

As you can see, there are plenty of avenues that you can contribute to:

- Github Discussions

- OWASP purpleteam Slack

- Project Board

- Submit Issue

- Submit PR

- Reporting Security Issues

- Public Roadmap

- CONTRIBUTING.md

PurpleTeam-Labs also has a submission in with Google Summer of Code for students this year. We’ve got plenty to work on, so here’s hoping!

PurpleTeam Next Steps

We will be getting started on a documentation site (not just a hosted doc git repo) soon. We will also be working on a real website. If you have a Dev Team that is keen to try PurpleTeam out, reach out to us if you need to. We are always looking for people to work on the codebase. Even if you’re a student, it’s a great way to learn about security, by coding it.
